UN Warns: AI-Powered Killer Robots Pose Deep Ethical and Legal Threats to Humanity

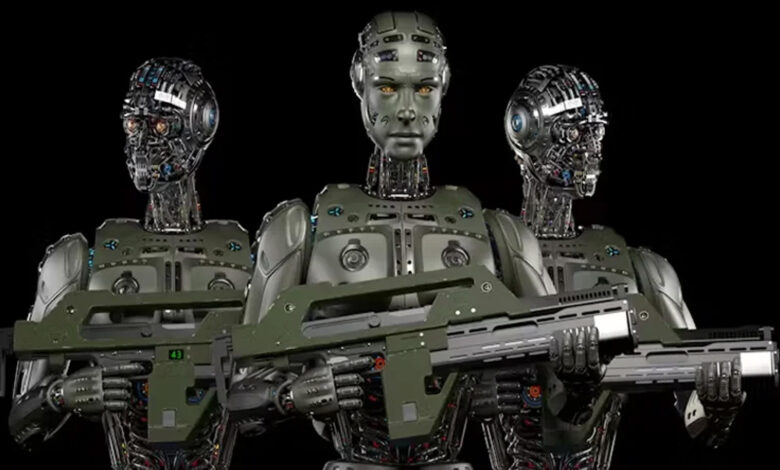

New York: The rise of **artificial intelligence in modern warfare**, particularly the use of AI-powered drones and autonomous weapons, is sounding alarms across international institutions, with the **United Nations (UN)** urging swift and binding regulations to curb what it calls a potential **“digital dehumanization”** of war.

According to a recent report published on the UN’s official website, **global policymakers** are increasingly concerned about a future in which **algorithms could decide matters of life and death**, potentially targeting both soldiers and civilians without direct human oversight. This shift raises **severe ethical and legal questions**.

### Autonomous Warfare: A Disturbing Future

Where once advanced drones were exclusive to wealthy nations, **low-cost modifications** have now made deadly drone technology more accessible, leading to its proliferation worldwide. These drones, paired with **AI-driven decision-making**, are transforming the nature of conflict—prompting critics to label them **“killer robots.”**

UN Secretary-General **António Guterres** has long maintained that **machines should never have the power to take human lives**, a sentiment echoed by **Izumi Nakamitsu**, the UN’s Disarmament Chief, who insists that granting machines the authority to select and engage military targets is **unacceptable under international law**.

### Human Rights Watch: A Digital Ethical Collapse

**Human Rights Watch (HRW)** calls the use of fully autonomous weapons one of the most extreme forms of **digital dehumanization**. **Mary Wareham**, Director of HRW’s Arms Division, warned that well-funded nations such as **the U.S., Russia, China, Israel, and South Korea** are investing heavily in AI-powered autonomous weapons for use on land, at sea, and in the air.

While proponents argue that **AI systems are less emotional and more precise** than human soldiers, critics say this **ignores major flaws**: machines are prone to **errors and bias**, and they lack the **moral judgment** required in combat situations.

### Who Is Responsible for War Crimes by Machines?

A central dilemma is **accountability**. If an AI-driven system mistakenly targets civilians—perhaps misidentifying a wheelchair or prosthetic limb as a weapon—**who bears responsibility?** Is it the developer, the programmer, or the military command? The campaign coalition **Stop Killer Robots** argues this accountability vacuum is one of the **gravest ethical risks** of AI warfare.

Member states are now under pressure to act. In **informal talks this month at UN headquarters**, Secretary-General Guterres urged them to **finalize a legally binding treaty** by next year that bans or strictly regulates autonomous weapons systems before they become an irreversible part of modern arsenals.

As AI continues to infiltrate domains like **law enforcement, border surveillance, and criminal profiling**, the call for **ethical boundaries and legal frameworks** grows louder. The international community must now decide: **Should machines have the power to kill?** And if not, **how do we stop them—before it’s too late?**